Forward-looking: First they came for the art, then they came for the text and garbled essays. Now they’re coming for music, with a “new” machine learning algorithm that adapts image generation to create, interpolate and loop new music clips and genres.

Seth Forsgren and Hayk Martiros adapted the Stable Diffusion (SD) algorithm to music, creating a new kind of weird “music machine” as a result. Riffusion works on the same principle as SD, turning a text prompt into new, AI-generated content. The main difference is that the algorithm has been specifically trained with sonograms, which can depict music and audio in visual form.

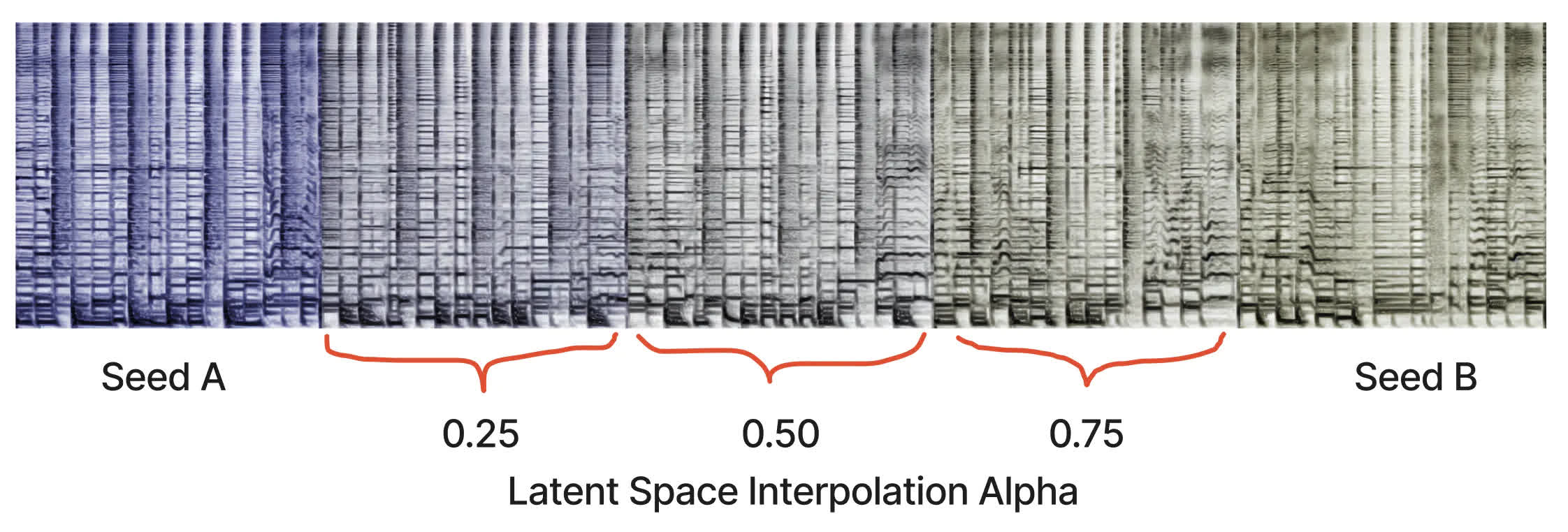

As explained on the Riffusion website, a sonogram (or a spectrogram for audio frequencies) is a visual way to represent the frequency content of a sound clip. The X-axis represents time, while the Y-axis represents frequency. The color of each pixel gives the amplitude of the audio at the frequency and time given by its row and column.
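
To make the time/frequency layout concrete, here is a minimal sketch of how such an image can be computed from raw audio samples. It is not Riffusion's own code: the function name `spectrogram`, the frame and hop sizes, and the plain (unwindowed) discrete Fourier transform are all assumptions chosen for brevity, and real pipelines typically add a window function and a perceptual frequency scale.

```python
import cmath
import math


def spectrogram(samples, frame_size=64, hop=32):
    """Return magnitudes as image[row][col]: row = frequency bin, col = time frame.

    Hypothetical helper for illustration; frame_size and hop are assumed values.
    """
    n_bins = frame_size // 2 + 1  # keep only the non-negative frequencies
    frames = [
        samples[start:start + frame_size]
        for start in range(0, len(samples) - frame_size + 1, hop)
    ]
    image = [[0.0] * len(frames) for _ in range(n_bins)]
    for col, frame in enumerate(frames):
        for row in range(n_bins):
            # Naive DFT for a single frequency bin of this frame
            acc = sum(
                x * cmath.exp(-2j * math.pi * row * n / frame_size)
                for n, x in enumerate(frame)
            )
            # The magnitude plays the role of the pixel "color"
            image[row][col] = abs(acc)
    return image


# A pure tone that falls exactly on bin 8 of a 64-sample frame
sample_rate = 8000
tone_hz = 8 * sample_rate / 64  # 1000 Hz
signal = [math.sin(2 * math.pi * tone_hz * n / sample_rate) for n in range(256)]

image = spectrogram(signal)
# For every time column, find the row (frequency bin) with the largest amplitude
loudest_rows = [
    max(range(len(image)), key=lambda r: image[r][c])
    for c in range(len(image[0]))
]
```

For this steady tone, every time column has its brightest pixel in the same frequency row, which is the horizontal line one would expect to see in the image.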

Riffusion adapts v1.5 of the Stable Diffusion visual algorithm “with no modifications,” just some fine-tuning to better process images of sonograms/audio spectrograms paired with text. Audio processing happens downstream of the model, while the algorithm can also generate infinite variations of a prompt by varying the seed.

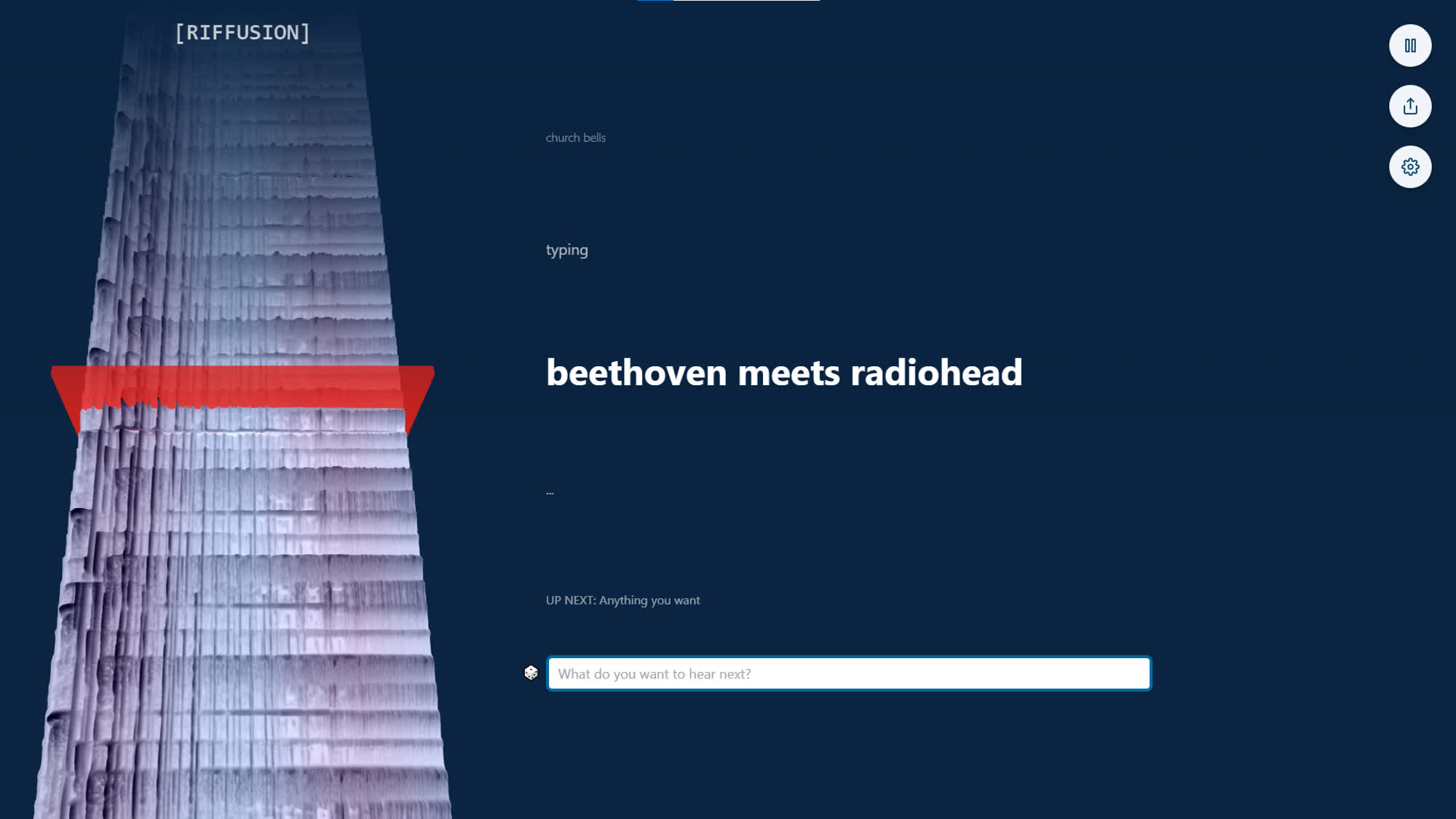

After generating a new sonogram, Riffusion turns the image into sound with Torchaudio. The AI has been trained with spectrograms depicting sounds, songs or genres, so it can generate new sound clips based on all kinds of textual prompts. Something like “Beethoven meets Radiohead,” for instance, which is a nice example of how otherworldly or uncanny machine learning algorithms can behave.

After designing the theory, Forsgren and Martiros put it all together into an interactive web app where users can experiment with the AI. Riffusion takes text prompts and “infinitely generates interpolated content in real time, while visualizing the spectrogram timeline in 3D.” The audio smoothly transitions from one clip to another; if there is no new prompt, the app interpolates between different seeds of the same prompt.
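
As a rough illustration of what interpolating between seeds can mean, the sketch below draws one noise vector per seed and blends them with spherical linear interpolation (slerp). The choice of slerp, the vector size, and the helper names `noise_from_seed` and `slerp` are assumptions for illustration only, not a description of the app's actual code.

```python
import math
import random


def noise_from_seed(seed, size=8):
    """Deterministic Gaussian noise vector for a given seed (hypothetical helper)."""
    rng = random.Random(seed)
    return [rng.gauss(0.0, 1.0) for _ in range(size)]


def slerp(t, a, b):
    """Spherical linear interpolation between vectors a and b, for t in [0, 1]."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    # Clamp to guard against rounding slightly outside [-1, 1]
    cos_theta = max(-1.0, min(1.0, dot / (norm_a * norm_b)))
    theta = math.acos(cos_theta)
    sin_theta = math.sin(theta)
    if abs(sin_theta) < 1e-6:
        # Vectors are (anti)parallel: fall back to plain linear interpolation
        return [(1 - t) * x + t * y for x, y in zip(a, b)]
    weight_a = math.sin((1 - t) * theta) / sin_theta
    weight_b = math.sin(t * theta) / sin_theta
    return [weight_a * x + weight_b * y for x, y in zip(a, b)]


# Same prompt, two different seeds: step from one starting noise to the other
start = noise_from_seed(1)
end = noise_from_seed(2)
steps = [slerp(i / 4, start, end) for i in range(5)]
```

Each entry in `steps` would be fed to the generator with the same prompt, so consecutive clips differ only slightly and the audio can drift from one variation to the next.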

Riffusion is built upon many open source projects, namely Next.js, React, TypeScript, three.js, Tailwind, and Vercel. The app’s code has its own GitHub repository as well.

Far from being the first audio-generating AI, Riffusion is yet another offspring of the ML renaissance which has already led to the development of Dance Diffusion, OpenAI’s Jukebox, Soundraw and others. It won’t be the last, either.