Why it matters: An interesting article published at WikiChip covers the seriousness of the SRAM shrinking problem in the semiconductor industry. TSMC reports that its SRAM transistor scaling has completely flatlined, to the point where SRAM caches are staying the same size across multiple nodes even as logic transistor density continues to improve. This is not ideal: it will force processor SRAM caches to take up more space on a die, which in turn could increase chip manufacturing costs and prevent certain chip architectures from becoming as small as they otherwise could be.

Nearly all processors depend on some type of SRAM caching. Caches act as a high-speed storage solution with very fast access times, thanks to their placement right next to the processing cores. Having fast, accessible storage can significantly boost processing performance and means the cores waste less time waiting to do their work.

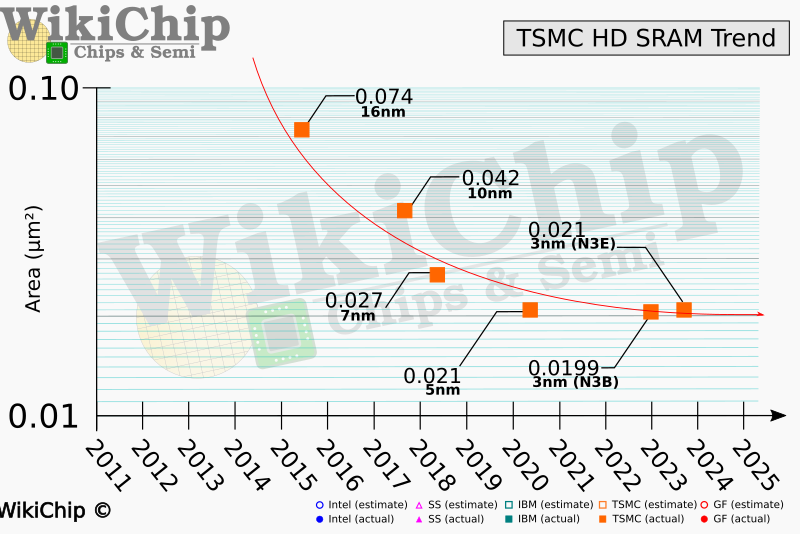

At the 68th Annual IEEE International Electron Devices Meeting (IEDM), TSMC revealed huge problems with SRAM scaling. N3B, the next node the company is developing for 2023, will use the same SRAM transistor size as its predecessor N5, which is used in CPUs like AMD’s Ryzen 7000 series.

Another node currently in development for 2024, N3E, is not much better, featuring a measly 5% reduction in SRAM transistor size…

For a broader perspective, WikiChip shared a graph of TSMC’s SRAM scaling history from 2011 to 2025. The first half of the graph, representing TSMC’s 16nm and 7nm eras, shows that SRAM scaling was not a problem and that cell sizes were shrinking at a rapid pace. But once the graph reaches 2020, scaling basically flatlines, with three generations of TSMC logic nodes using nearly identical SRAM sizes: N5, N3B and N3E.

With logic transistor density still increasing at a rapid pace (up to 1.7x in the case of N3E) but SRAM transistor density not following the same path, SRAM will begin consuming far more die area in the future. WikiChip demonstrated this with a hypothetical 10 billion transistor chip built on several nodes. On N16 (16nm), the die is large, with just 17.6% of the die area made up of SRAM transistors; on N5 this rises to 22.5%, and on N3 to 28.6%.
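The die-area arithmetic behind this argument can be sketched in a few lines of Python. This is a rough illustration, not WikiChip's methodology: the function name `sram_area_share` and the two-component model (a die made only of logic and SRAM, each scaled by a single factor) are assumptions made for the example. It starts from the 22.5% SRAM share quoted for N5 and applies the headline figures mentioned above: up to 1.7x logic density and roughly a 5% SRAM shrink for N3E.

```python
def sram_area_share(sram_share, logic_density_gain, sram_area_scale):
    """Estimate SRAM's share of die area after a node shrink.

    Hypothetical two-component model (assumption): the die is only
    logic plus SRAM, and each part scales by one uniform factor.

    sram_share         -- SRAM fraction of die area on the old node (0..1)
    logic_density_gain -- logic density improvement, e.g. 1.7 for 1.7x
    sram_area_scale    -- new SRAM area relative to old, e.g. 0.95
    """
    if not 0.0 <= sram_share <= 1.0:
        raise ValueError("sram_share must be between 0 and 1")
    if logic_density_gain <= 0 or sram_area_scale <= 0:
        raise ValueError("scaling factors must be positive")

    # Areas are expressed relative to the old die (old die area = 1.0).
    new_sram_area = sram_share * sram_area_scale
    new_logic_area = (1.0 - sram_share) / logic_density_gain
    return new_sram_area / (new_sram_area + new_logic_area)


# Start from the N5 figure in the article and apply N3E-style scaling.
share = sram_area_share(0.225, logic_density_gain=1.7, sram_area_scale=0.95)
print(f"Estimated SRAM share of die area: {share:.1%}")
```

Under these idealized assumptions the share comes out at roughly 32%, somewhat above the 28.6% WikiChip gives for N3. The gap is expected from a model this simple, which ignores analog and I/O circuitry and assumes the full headline logic gain applies to the whole chip. The trend is the same either way: when logic shrinks and SRAM does not, SRAM's slice of the die grows.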

WikiChip also notes that TSMC is not the only manufacturer with this problem. Intel has also seen noticeable slowdowns in SRAM transistor shrinkage on its own process.

Unless this is somehow remedied, we could soon see SRAM caches consuming up to 40% of a processor’s die area. That would force chip architectures to be reworked and add to development costs. Another way manufacturers might cope would be to reduce cache capacity altogether, which would lower performance. However, alternative memory technologies are being looked at as replacements, including MRAM, FeRAM, and NRAM, among others. For now, it remains a challenge with no clear answer in the immediate future.